旅游网站网页设计报告wordpress标签管理插件

las数据转pcd数据

- 一、算法原理

- 1.介绍`las`

- 2.主要函数

- 二、代码

- 三、结果展示

- 3.1 `las`数据

- 3.2 `las`转换为`pcd`

- 四、相关数据链接

一、算法原理

1.介绍las

LAS文件按每条扫描线排列方式存放数据,包括激光点的三维坐标、多次回波信息、强度信息、扫描角度、分类信息、飞行航带信息、飞行姿态信息、项目信息、GPS信息、数据点颜色信息等。LAS格式定义中用到的数据类型遵循1999年ANSI(AmericanNationalStandardsInstitute,美国国家标准化协会)C语言标准。

2.主要函数

inFile = np.vstack((las.x, las.y, las.z)).transpose() # 转换为ndarray

二、代码

import laspy

import numpy as np

import open3d as o3ddef las2pcd_rel(lasfile):las = laspy.read(lasfile) # 读取点云inFile = np.vstack((las.x, las.y, las.z)).transpose() # 转换为ndarray# 将numpy转换为点云文件pcd = o3d.geometry.PointCloud()pcd.points = o3d.utility.Vector3dVector(inFile)o3d.visualization.draw_geometries([pcd])return pcdif __name__ == '__main__':path = 'res/城市路面.las'las2pcd_rel(path)

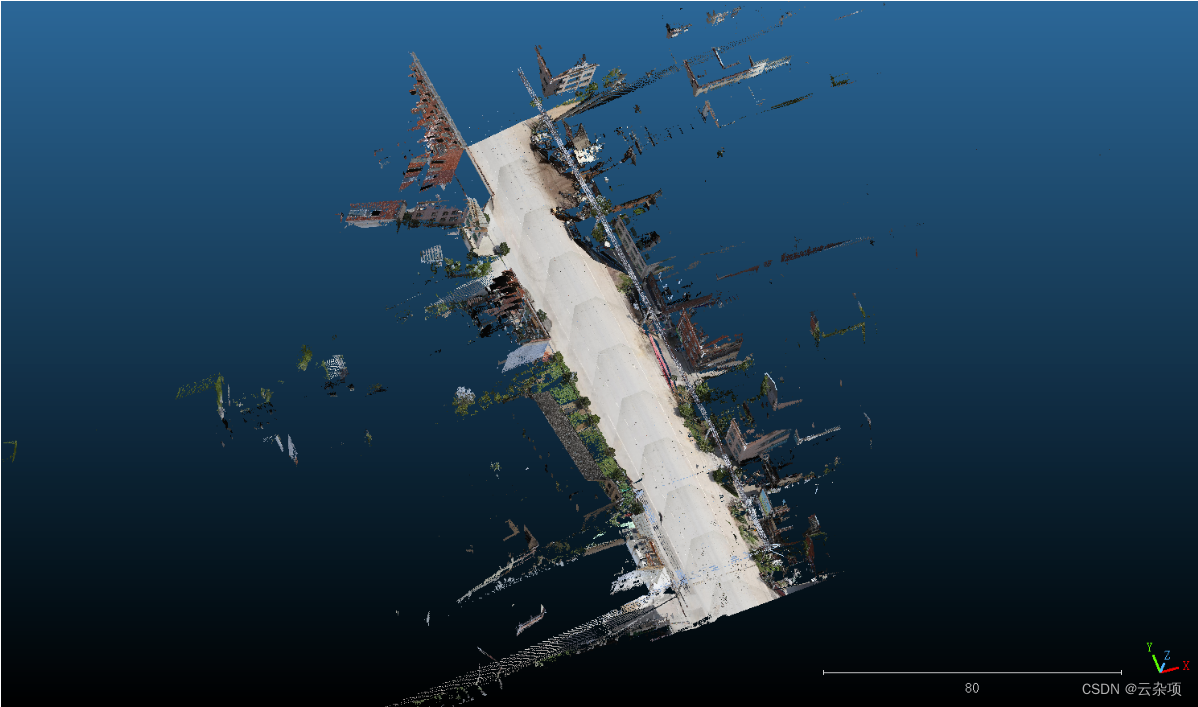

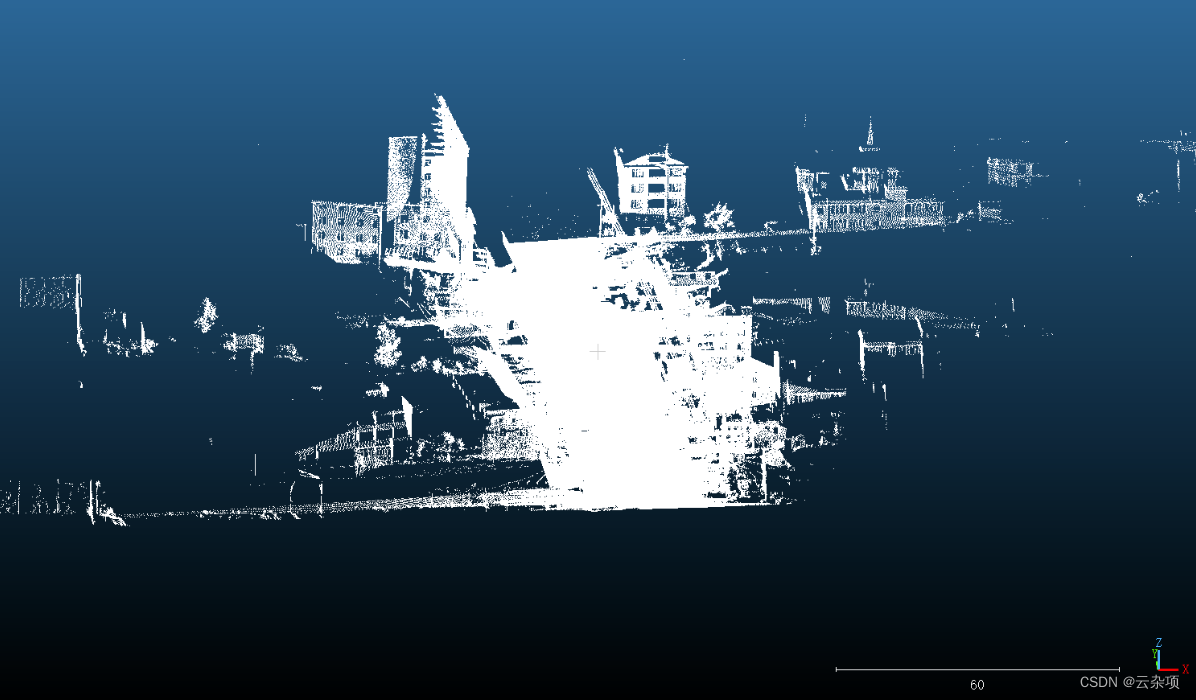

三、结果展示

3.1 las数据

3.2 las转换为pcd

四、相关数据链接

百度网盘数据集:

包括 obj,pcd,las,png,ply等

百度网盘链接:https://pan.baidu.com/s/1JFxKUk_xMcEmpfBHtuC-Pg

提取码:cpev