
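This is a source code package for a cloud HIS (hospital information system) with a decoupled front end and back end: the front end is built with the Angular framework and JavaScript, and the back end is written in Java. It integrates a B/S (browser/server) electronic medical record (EMR) system and supports EMR Level 4. Both the HIS and the EMR system carry independent intellectual property rights.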


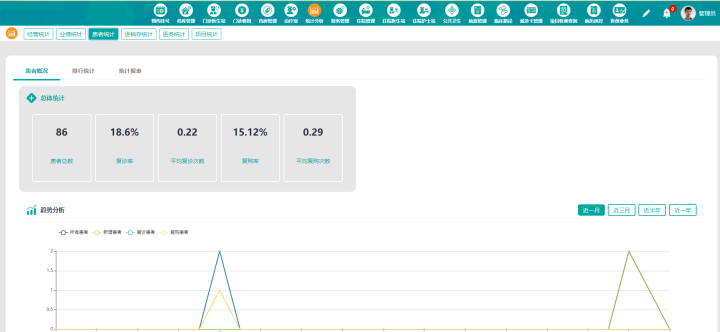

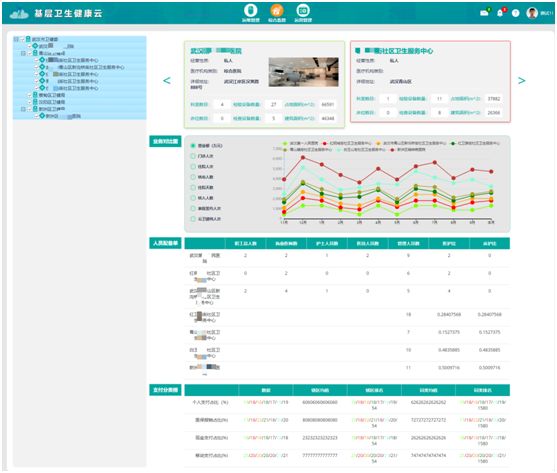

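The cloud-based hospital management system (cloud HIS for short) uses a B/S architecture built on cloud computing, with a decoupled front end and back end: the front end is built with the Angular framework and JavaScript, and the back end is written in Java. The system is developed along service-oriented, modular lines, so it is extensible and straightforward to customize. It gives healthcare institutions a standardized, efficient, and reliable medical information management system covering the standard functions of patient administration and clinical care management. It supports coordinated outpatient care, inpatient care, pharmacy and drug storeroom management, two-way referral for treatment and testing, electronic medical records, remote consultation and diagnosis, and the sharing and exchange of medical data. This addresses duplicate data collection and information silos, and lays a foundation for a regional collaborative health information platform.

The modules cover outpatient care, inpatient care, billing, electronic medical records, pharmacy, drug storeroom, finance, and statistics, with support for medical insurance interfaces.

Functional modules: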

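I. [Registration and Appointment System]

This module provides the functions needed at the outpatient registration desk: managing clinic schedules, generating and maintaining the slot table, managing outpatient appointments, and processing registrations. It also provides patient information queries and statistics on registration work. Appointment, limited-quota, unlimited, and time-slot registration are supported.

(Main functions: clinic scheduling, registration processing, statistics and queries, etc.)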
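The limited, unlimited, and time-slot registration modes can be illustrated with a small sketch. This is not taken from the system's source code: the class `ScheduleSketch`, its methods, and the `UNLIMITED` marker are hypothetical names chosen for illustration, assuming each time slot simply carries a quota.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.OptionalInt;

// Hypothetical sketch: a clinic session split into time slots, each with a quota.
class ScheduleSketch {
    // Assumed marker for a slot without a quota ("unlimited" registration).
    static final int UNLIMITED = -1;

    private final Map<String, Integer> quota = new LinkedHashMap<>();  // slot -> max registrations
    private final Map<String, Integer> issued = new LinkedHashMap<>(); // slot -> registrations issued

    void addSlot(String slot, int maxRegistrations) {
        quota.put(slot, maxRegistrations);
        issued.put(slot, 0);
    }

    // Returns the queue number within the slot, or empty if the slot is unknown or full.
    OptionalInt register(String slot) {
        Integer max = quota.get(slot);
        if (max == null) {
            return OptionalInt.empty();
        }
        int used = issued.get(slot);
        if (max != UNLIMITED && used >= max) {
            return OptionalInt.empty();
        }
        issued.put(slot, used + 1);
        return OptionalInt.of(used + 1);
    }
}
```

A slot added with a quota of 2 accepts two registrations and rejects the third, while a slot added with `UNLIMITED` keeps issuing queue numbers.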

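II. [Pricing and Billing System]

This module combines pricing and charging. It offers user-defined fee categories and charge coefficients and flexible input methods, and it is easy to learn. Items that are missing from the price list can be priced manually. It is linked to outpatient pharmacy inventory and raises an alert when a drug is out of stock. The price list is managed centrally, the hospital "one-card" is supported, and medical insurance rules are integrated: control of insured charge items, automatic splitting of fees by ratio, and tiered fee statistics according to insurance policy.

(Main functions: pricing and charging, account closing, receipt queries, refunds, daily reports, etc.)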
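As an illustration of splitting a fee by ratio, the following minimal sketch divides a charge between the insurer and the patient. It is an assumption about how such a split could work, not the system's actual rule set: `FeeSplitSketch`, `split`, and the two-decimal rounding policy are hypothetical.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

// Hypothetical sketch: split one charge between insurer and patient by a ratio.
class FeeSplitSketch {
    // Returns {insurerPays, patientPays}. The insurer share is rounded to
    // two decimals and the patient pays the remainder, so the two parts
    // always add up to the (two-decimal) total.
    static BigDecimal[] split(BigDecimal total, BigDecimal insurerRatio) {
        BigDecimal roundedTotal = total.setScale(2, RoundingMode.HALF_UP);
        BigDecimal insurer = roundedTotal.multiply(insurerRatio)
                                         .setScale(2, RoundingMode.HALF_UP);
        BigDecimal patient = roundedTotal.subtract(insurer);
        return new BigDecimal[] { insurer, patient };
    }
}
```

Computing the patient share as a remainder, rather than rounding both shares independently, avoids one-cent discrepancies between the parts and the total.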

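III. [Outpatient Pharmacy System]

The outpatient pharmacy system is the hospital's dispensing center for outpatient prescriptions. Pharmacies can be divided by category, such as a traditional Chinese medicine pharmacy, a Western medicine pharmacy, and a Chinese patent medicine pharmacy. The pharmacy is linked to the drug storeroom, so stock issued by the storeroom is received directly into the pharmacy. It is also linked to outpatient billing, so the list of priced prescription drugs is displayed directly. Patient prescriptions can be queried, as can dispensed quantities for any time period and the patients dispensed to during the midday period.

(Main functions: prescription management, drug issue and receipt management, inventory management, pharmacy stocktaking, outpatient dispensing, dispensing statistics, statistical queries, statistics on patients dispensed to, dynamic queries over any time period for dispensing, loss write-offs, drug returns, and goods returns, and control of drug expiry dates and upper and lower quantity limits.)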
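The expiry and quantity-limit controls listed above can be sketched as a single check over one stock item. The class `StockCheckSketch`, the alert names, and the `warnDays` parameter are hypothetical and only illustrate the idea; the source does not describe how the system implements these checks.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: flag stock-level and expiry problems for one drug batch.
class StockCheckSketch {
    static List<String> check(int quantity, int lowerLimit, int upperLimit,
                              LocalDate expiry, LocalDate today, int warnDays) {
        List<String> alerts = new ArrayList<>();

        // Quantity checks: out of stock takes priority over the lower limit.
        if (quantity <= 0) {
            alerts.add("OUT_OF_STOCK");
        } else if (quantity < lowerLimit) {
            alerts.add("BELOW_LOWER_LIMIT");
        } else if (quantity > upperLimit) {
            alerts.add("ABOVE_UPPER_LIMIT");
        }

        // Expiry checks: a batch expiring today counts as expired; a batch
        // expiring within warnDays from today is reported as near expiry.
        if (!expiry.isAfter(today)) {
            alerts.add("EXPIRED");
        } else if (!expiry.isAfter(today.plusDays(warnDays))) {
            alerts.add("NEAR_EXPIRY");
        }
        return alerts;
    }
}
```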

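IV. [Outpatient Physician Workstation System]

The outpatient physician workstation is easy to operate and suited to modern clinical practice. It ships with a large medical order dictionary, and common order phrases (for prescriptions, lab tests, chemotherapy, critically ill patients, and so on) are preloaded in the database. The system re-sorts entries automatically according to how often the physician uses them, which both makes lookups easier for physicians and improves the efficiency and accuracy of care work.

(Main functions: outpatient records, health records, electronic prescriptions, electronic lab requests, electronic bills, electronic medical orders, etc.)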
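Re-sorting dictionary entries by usage frequency can be sketched as follows. This is only one plausible approach under simple assumptions (a per-term counter and a stable sort); `OrderDictionarySketch` and its methods are hypothetical names, not the system's API.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: an order dictionary ranked by how often each term is used.
class OrderDictionarySketch {
    private final List<String> terms = new ArrayList<>();
    private final Map<String, Integer> useCount = new HashMap<>();

    void add(String term) {
        terms.add(term);
        useCount.put(term, 0);
    }

    // Call whenever a physician picks a term; unknown terms are ignored.
    void recordUse(String term) {
        if (useCount.containsKey(term)) {
            useCount.merge(term, 1, Integer::sum);
        }
    }

    // Most frequently used terms first. List.sort is stable, so terms with
    // equal counts keep their original insertion order.
    List<String> ranked() {
        List<String> copy = new ArrayList<>(terms);
        copy.sort(Comparator.comparingInt((String t) -> useCount.get(t)).reversed());
        return copy;
    }
}
```

Keeping one such counter per physician, rather than one shared counter, would be needed to rank entries by each physician's own habits.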

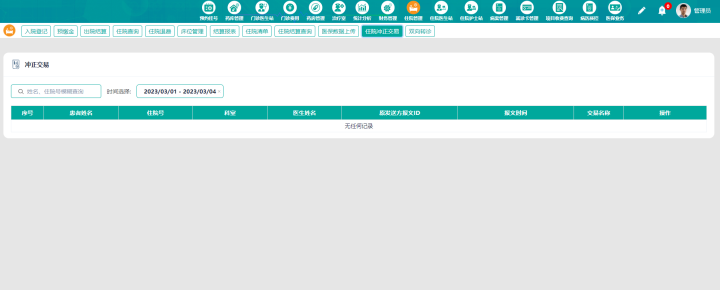

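V. [Inpatient Administration System]

Inpatient administration covers admission registration, prepayment management, and discharge management. Two settlement methods are provided: normal discharge settlement and interim settlement. An interim settlement settles a patient's charges after the patient has been hospitalized for some time, in order to simplify management. The hospital can run an interim settlement at any time at the patient's request.

(Main functions: admission registration, deposit management, inpatient status queries, medical record number replacement, patient charge queries, interim settlement statements, discharge settlement, order query and printing, charge query and printing, revenue accounting by department, data maintenance, etc.)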
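The difference between prepayment and interim settlement can be shown with a small account model. The sketch assumes that an interim settlement totals all charges not yet settled and deducts them from the deposit; `InpatientAccountSketch` and its methods are hypothetical, and the real settlement rules may differ.

```java
import java.math.BigDecimal;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: an inpatient account with prepayments and interim settlement.
class InpatientAccountSketch {
    private BigDecimal deposit = BigDecimal.ZERO;
    private final List<BigDecimal> unsettledCharges = new ArrayList<>();

    void prepay(BigDecimal amount) {
        deposit = deposit.add(amount);
    }

    void charge(BigDecimal amount) {
        unsettledCharges.add(amount);
    }

    // Settles every charge recorded since the last settlement and returns
    // the settled amount. A negative deposit afterwards means the patient
    // owes money and needs to top up.
    BigDecimal settleInterim() {
        BigDecimal total = BigDecimal.ZERO;
        for (BigDecimal c : unsettledCharges) {
            total = total.add(c);
        }
        deposit = deposit.subtract(total);
        unsettledCharges.clear();
        return total;
    }

    BigDecimal depositBalance() {
        return deposit;
    }
}
```

In this model a discharge settlement is the final call to `settleInterim()` followed by refunding or collecting the remaining balance.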

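VI. [Inpatient Physician Workstation System]

The inpatient physician workstation sits at the core of the inpatient workflow. It is simple to operate and suited to modern clinical work. To fit the particular circumstances of inpatient care, it offers several order entry modes: quick entry, standard entry, and retrospective entry. All medical dictionaries are ordered automatically by usage frequency, which improves physicians' efficiency and helps them serve patients better.

(Main functions: patient order entry, order review, order termination, order reorganization, order queries, queries on the front page of the patient's medical record, transfers between departments, discharge, etc.)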

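VII. [Inpatient Nurse Station System]

The inpatient nurse station is likewise central to the inpatient workflow. It handles tiered management of ward beds, order verification, and order execution, along with management of patient information during the stay, ward classification, and queries on wards, patient information, patient charges, and related data.

(Main functions: assigning patients to a ward bed, swapping beds, executing and terminating orders, preparing prescription doses, dose preparation queries, transfers between departments, discharge requests, etc., covering nurses' day-to-day work.)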

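VIII. [Inpatient Pharmacy System]

The inpatient pharmacy system is the dose preparation center for inpatient prescriptions. Pharmacies can be divided by category, such as a traditional Chinese medicine pharmacy, a Western medicine pharmacy, and a Chinese patent medicine pharmacy. The pharmacy is linked to the drug storeroom, so stock issued by the storeroom is received directly into the pharmacy. It is also connected to the inpatient nurse and physician workstations, so the list of priced prescription drugs is displayed directly and doses can be prepared and queried against each patient's orders.

(Main functions: prescription management, drug issue and receipt management, inventory management, pharmacy stocktaking, ward dose preparation, dispensing statistics, statistical queries, dynamic queries over any time period for dispensing, loss write-offs, drug returns, and goods returns, and control of drug expiry dates and upper and lower quantity limits.)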

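IX. [Drug Storeroom Management System]

The drug storeroom system is the hospital's drug management center. It manages drug planning, purchasing, receipt, and issue, tracks basic drug information, quantities, and shelf life in real time, and supports stocktaking and dynamic queries on sales amounts.

(Main functions: purchase planning, purchase settlement, business analysis, dynamic queries on storeroom receipts, storeroom inventory, retail drug sales, and storeroom loss write-offs, system maintenance, etc.)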

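X. [Electronic Medical Record Management System]

The EMR system is aimed mainly at physicians and nurses in healthcare institutions and lets them write, save, edit, and print electronic medical records for inpatients. It is delivered as a cloud SaaS service and used through a browser, providing an integrated solution for creating, managing, and using electronic medical records online.

(Functions:

1. Template creation: style control, data storage, image management, control management, table management, page layout, print settings

2. Record writing: style control, record quality control, personal templates, control-based input, data synchronization, record printing, assisted input)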

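XI. [Medical Card Management System]

The hospital "one-card" system supports card-based charging and settlement. Patients can swipe the card at the pharmacy to collect drugs, and the card stands in for the medical record number, which shortens queues and improves both service quality and efficiency. The card can be used for payment, consultation, and settlement in every hospital department.

(Main functions: issuing patient cards, cash deposits, charge queries, charge statistics and summaries, reporting a card lost, card replacement, card cancellation, and card maintenance, plus use of the card for consultation in every hospital department, etc.)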

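XII. [Medical Records Management System]

The medical records management system is the repository of patient case files. These records give a full and accurate account of each patient's diagnosis, treatment, nursing, and lab tests. They also reflect the quality and outcomes of care delivered by the hospital and its physicians, and they are valuable material for medical research. The system offers comprehensive management, detailed statistics, sharing over the network, and prompt retrieval.

(Main functions: case file editing, case file queries, case file statistics, treatment record queries, disease classification queries, record maintenance, treatment evaluation, case file lending, integrated information search, report printing.)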

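XIII. [Financial Management System]

The financial management system is the center of the hospital's cost accounting. It connects to every outpatient and inpatient subsystem and generates reports for them automatically. It produces detailed and classified ledgers, provides daily, monthly, and annual statistical reports, prints balance sheets, and compiles separate statistics by division, department, physician, and cashier.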